On 12 May 2021, the UK Government finally announced the details of its long-awaited draft internet regulation. The Online Safety Bill, first proposed by Theresa May under the name Online Harms Bill, sets out strict guidelines governing the removal of illegal and harmful content such as child sexual abuse, terrorist material and media that promotes suicide. It is also the first time that online misinformation comes under the remit of a regulator.

The draft Bill, a joint proposal from the Department for Digital, Culture, Media and Sport (DCMS) and the Home Office, is to be scrutinised by a joint committee of MPs before a final version is formally introduced to Parliament.

Here is an overview of the draft Bill:

Duty of Care

Part 2 of the Bill introduces a duty of care on certain online providers to protect their users from illegal harms and legal but harmful content, and safeguard children from both illegal and legal but harmful content. The Bill regulates:

- user-to-user services, meaning services such as Facebook and Instagram that allow users to upload and share user-generated content (UGC); and

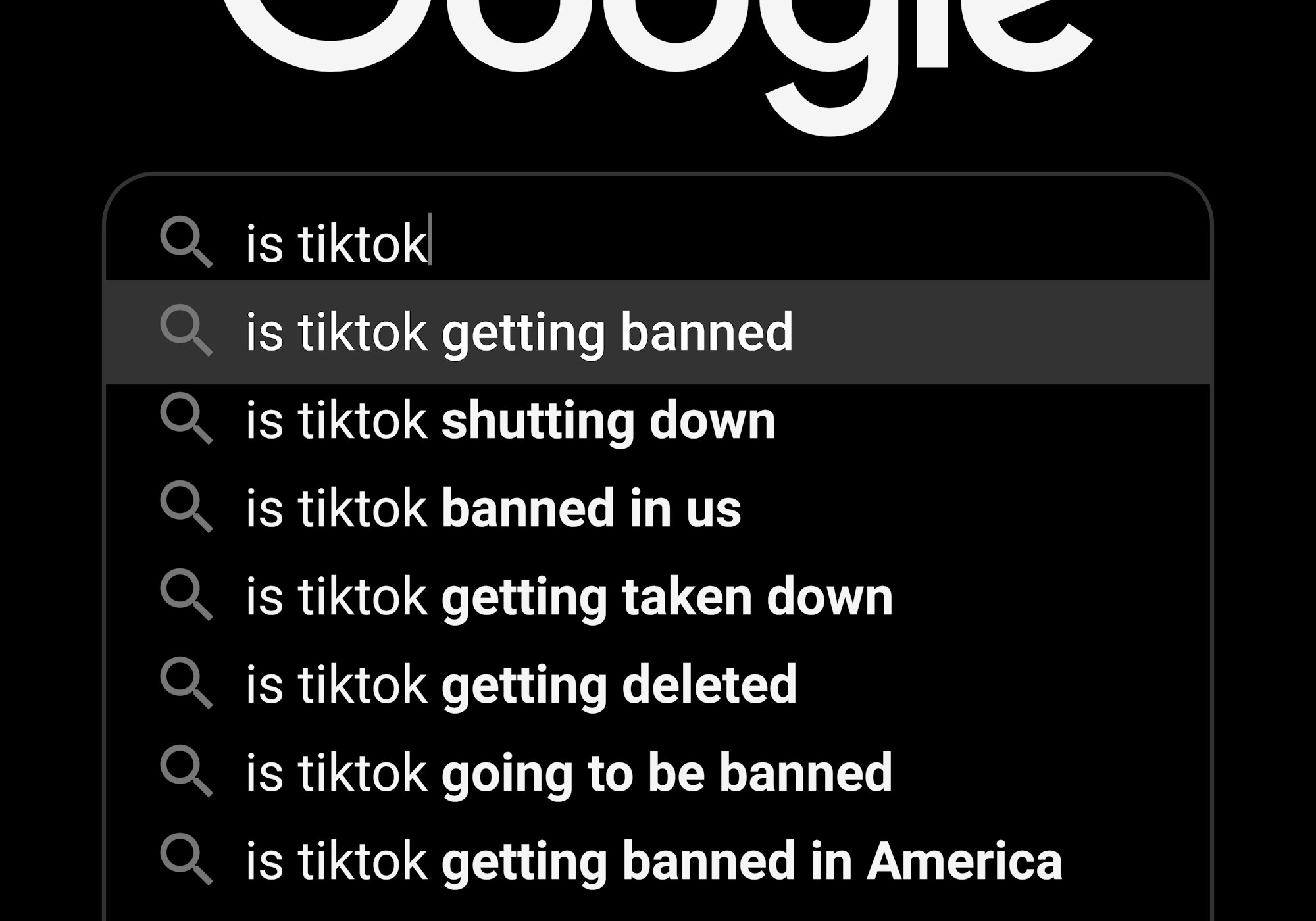

- search services, such as Google, Bing and Yahoo.

The Bill defines UGC as content:

- generated by a user of the service, or uploaded to, or shared on the service by a user; and

- that may be encountered by another user or users of the service.

The proposed statutory duty of care for regulated providers involves identifying, removing and limiting the spread of illegal or harmful content.

Carve-outs

Schedule 1 of the Bill provides a range of exempt services, including:

- where the only UGC is email, SMS, MMS and aural communication (phone/WhatsApp conversations);

- limited functionality services, such as comments, likes, reviews, votes and emojis;

- internal business functions; and

- public bodies.

Certain Online Providers

Businesses that fall within the scope of the Bill are categorised, and their duties determined, according to the number of users they have, their functionalities and the risk of spreading harmful content. Category 1 is likely to encompass the most prominent social media companies, with the majority of other businesses being caught by Category 2. Category 2 is split into Category 2A, businesses assessed by reference to the number of UK users they have, and Category 2B, regulated user-to-user service providers assessed according to the number of UK users they have and their functionalities. The threshold conditions for each category are listed in Schedule 4 of the Bill.

Extra-territorial Application

While the Bill will apply to the whole of the UK, it will also cover services based outside the UK where users in the UK are affected.

Harmful Content

The definition of harmful content remains vague throughout the Bill. Section 45 provides that content is harmful to children if:

- the provider of the service has reasonable grounds to believe that the nature of the content is such that there is a material risk of the content having, or indirectly having, a significant adverse physical or psychological impact on a child of ordinary sensibilities; or

- there is a material risk of the fact of the content’s dissemination having a significant adverse physical or psychological impact on a child of ordinary sensibilities, taking into account how many users may be assumed to encounter the content by means of the service and how easily, quickly and widely it may be disseminated.

Section 46 sets out the criteria for assessing content harmful to adults. The difference is that this section makes a reference to an ‘adult of ordinary sensibilities’ instead.

Whether the users concerned are children or adults, what constitutes harmful content will be further elaborated by Codes of Practice and secondary legislation. However, there is particular emphasis on protecting children online, with more onerous obligations on providers of user-to-user services ‘likely to be accessed by children’.

Codes of Practice and the Regulator

As the regulator, Ofcom will be required to produce Codes of Practice on aspects relating to compliance, terrorism and child sexual exploitation and abuse (CSEA). It will also be required to maintain a register of service providers that meet the Category 1 and Category 2 thresholds. Regulated providers who meet a specified threshold will be required to notify Ofcom and pay an annual fee.

Ofcom’s enforcement powers include, among others, fining businesses up to £18 million or 10% of their annual revenue (whichever is greater) for non-compliance, and issuing ‘use of technology’ warnings and notices requiring the use of particular technology for compliance purposes. The Bill also makes provision for holding senior managers criminally liable for failure to comply with information requests.
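The ‘whichever is greater’ rule for the maximum fine can be illustrated with a short calculation. The sketch below is only an illustration of the arithmetic described above: the function name `maximum_fine` and the revenue figures are hypothetical, and ‘annual revenue’ is treated as a single figure in pounds, leaving aside how the Bill itself would measure it.

```python
from decimal import Decimal

# Figures taken from the text above; everything else is illustrative.
FIXED_CAP_GBP = Decimal("18000000")  # £18 million
REVENUE_SHARE = Decimal("0.10")      # 10% of annual revenue


def maximum_fine(annual_revenue_gbp: Decimal) -> Decimal:
    """Return the upper limit of a fine: the greater of £18m or 10% of revenue."""
    if annual_revenue_gbp < 0:
        raise ValueError("annual revenue cannot be negative")
    return max(FIXED_CAP_GBP, annual_revenue_gbp * REVENUE_SHARE)


if __name__ == "__main__":
    # Hypothetical revenues, chosen to fall on either side of the £180m
    # point at which 10% of revenue overtakes the fixed £18m figure.
    for revenue in (Decimal("50000000"), Decimal("2000000000")):
        fine = maximum_fine(revenue)
        print(f"revenue £{revenue:,} -> maximum fine £{fine:,.0f}")
```

On these assumptions, the fixed £18 million figure sets the ceiling for any business with annual revenue below £180 million, while the 10% figure takes over for larger businesses.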

What does it mean for businesses and users?

Businesses will have to put in place systems and processes to comply with these new duties, which will come at a cost.

The risk-based approach, transparency, reporting and record-keeping requirements will place a considerable compliance burden on regulated services. The Impact Assessment, published alongside the draft Bill, estimates that it will cost the industry over £346 million in regulator fees, £9.2 million to read and understand the legislation, and £14.2 million to update terms of service.

Ongoing compliance costs may reach close to £1 billion. This figure does not account for potential fines and the broader impacts, including freedom of expression and privacy implications, innovation and competition impacts, business disruption measures and the potential requirement for some businesses to adopt age verification measures.

Service providers trying to prepare for the impact of the new regulatory framework lack clarity at this stage. The Bill is vague as to what constitutes harmful content and to whom. This will only become clearer once further Codes of Practice are developed by Ofcom, and secondary legislation is issued.

Many users will likely experience disruption in their online activity even though the Bill makes express provision to protect freedom of expression and privacy. Providers of both regulated user-to-user services and regulated search services will find it challenging to strike the right balance between these competing duties. Moreover, providers may feel inclined to adopt restrictive measures or remove content out of caution, to mitigate the risk of heavy fines for failing to improve user safety in relation to different types of content.

The Bill also safeguards access to journalistic content and political speech, with both professional and citizen journalists falling outside the scope of the regulation. Again, it will be challenging for providers to draw a line between citizen journalists and bloggers.

Artificial Intelligence moderation technology may be deployed by providers in their efforts to meet their new online safety duty. Nevertheless, there is a risk of innocuous content being flagged as harmful as well as harmful content slipping past if the technology is unable to interpret contextual information.

All of this will likely make many users unhappy, inundate Ofcom with appeals, and land many disputes in court (whether brought by a user against Ofcom, a user against a provider or a provider against Ofcom).

Final words

Although the Bill will be subject to debate, it will likely progress smoothly considering the government’s majority in Parliament. However, even assuming that the legislation comes into force with immediate effect, a range of measures will still require secondary legislation and further clarification through several Codes of Practice.

Only after these further developments will it be possible to say what content is harmful and to whom, whether the legislation is workable and how much of an impact it will have.

Further reading

- Paul Lewis and the Financial Times’s Editorial Board argue that the Online Safety Bill has ‘little safety in it, for adults or children, from thieves’ and that it ‘misses fraud’s gateway’.

- Alex Hern from The Guardian argues that ‘if content moderation was hard before, it could become almost impossible’.

- Mike Masnick notes in a blog post on Techdirt.com that requiring companies to remove lawful speech is ‘the very model used by the Great Firewall of China’.

References

DCMS, Draft Online Safety Bill (CP 405, 12 May 2021)

Home Office and DCMS, Online Harms White Paper – Initial consultation response (February 2020)

Home Office and DCMS, Online Harms White Paper: Full Government Response to the Consultation (Cm 354, 2020)

References – Further Reading

Alex Hern, ‘Online Safety Bill: A Messy New Minefield in the Culture Wars’ (The Guardian, 12 May 2021) <https://www.theguardian.com/technology/2021/may/12/online-safety-bill-why-is-it-more-of-a-minefield-in-the-culture-wars> accessed 23 May 2021

Mike Masnick, ‘UK Now Calling Its ‘Online Harms Bill’ The ‘Online Safety Bill’ But A Simple Name Change Won’t Fix Its Myriad Problems’ (Techdirt, 17 May 2021) <https://www.techdirt.com/articles/20210513/17462446801/uk-now-calling-online-harms-bill-online-safety-bill-simple-name-change-wont-fix-myriad-problems.shtml> accessed 23 May 2021

Paul Lewis, ‘Online Fraud: Let’s Close the Loopholes’ (Financial Times, 20 May 2021) <https://www.ft.com/content/eb70531a-8805-4085-9f82-acc50756225a> accessed 23 May 2021

The editorial board, ‘UK Online Harms Bill Misses Fraud’s Gateway’ (Financial Times, 16 May 2021) <https://www.ft.com/content/239c33ea-8d41-4e79-ab88-e53bf558a2cf> accessed 23 May 2021